
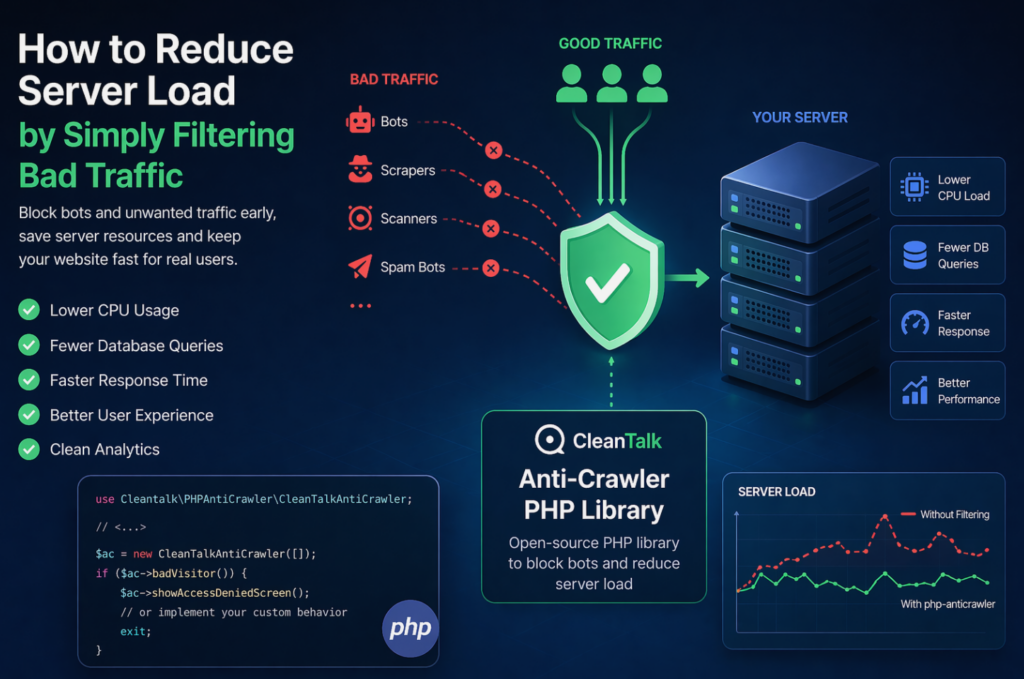

How to Reduce Server Load by Simply Filtering Bad Traffic

When a website starts slowing down, many teams immediately think about scaling infrastructure: adding CPU, RAM, or more servers, or optimizing the database. In reality, a significant part of server load is often caused not by real users but by automated traffic: bots, scrapers, vulnerability scanners, spam robots, and aggressive crawlers. These requests may continuously
